
Written by Memori Team

Introducing Memori Cloud: Fully Hosted SQL-Native Memory Layer for AI Agents

Without a memory layer, AI agents are stateless. They rely on prompt history and repeated context injection, which increases token spend, adds latency, and makes experiences inconsistent across sessions.

Today, we’re launching Memori Cloud, a fully hosted version of Memori - our SQL-native memory layer for AI applications, agents, and copilots. With Memori Cloud, developers can add persistent memory to production AI systems in minutes using a single API key, without provisioning databases or managing storage infrastructure.

Why persistent memory matters for AI agents

Prompt history is not memory. For production AI agents, you need a system that can capture interactions, extract structured knowledge, and retrieve the right context later.

Memori Cloud helps teams build AI agents that can:

- remember facts, preferences, skills, and relationships across sessions

- reduce prompt stuffing and, in the right workloads, cut inference costs by up to 98%

- surface the right memories and let stale ones decay

- inspect what was stored and what was recalled

Because Memori is SQL-native, memory is structured, durable, and auditable - a better fit for real production workloads than ad hoc prompt management or opaque memory stacks.

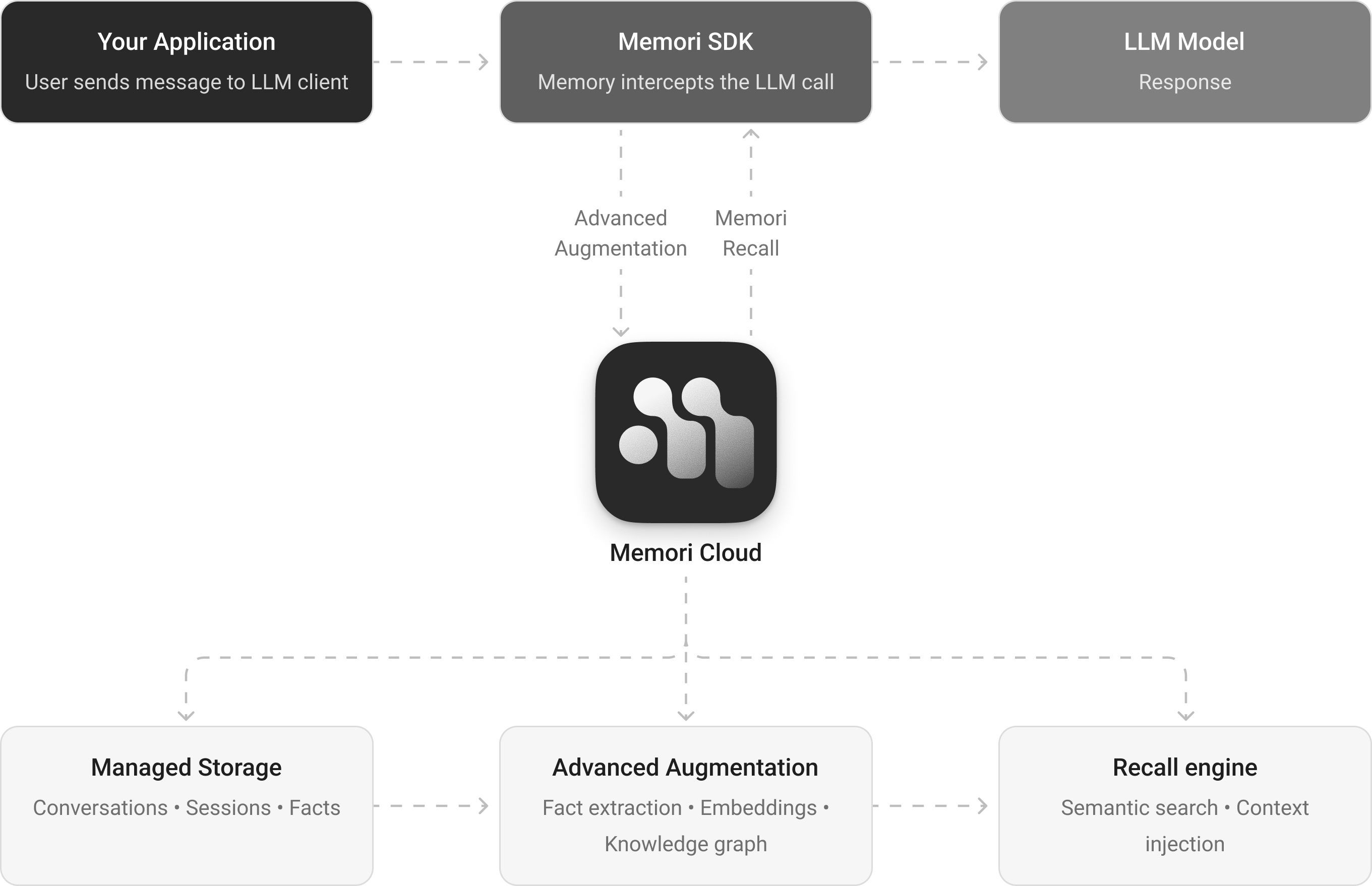

How Memori Cloud works

Memori Cloud is designed to be fast on the request path and smarter in the background:

- Synchronous capture stores LLM conversations as they happen

- Asynchronous augmentation extracts facts, preferences, skills, and relationships

- Semantic recall ranks and injects the most relevant memories into future prompts

- Memory decay ages and deprioritizes facts based on recency and access frequency, so stale context doesn't crowd out what's actually relevant

- Knowledge graph construction turns extracted triples into connected memory
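To make the recall and decay steps concrete, here is a minimal sketch of decay-weighted ranking. It is not Memori's actual scoring: the `Memory` fields, the 30-day half-life, and the logarithmic frequency boost are all assumptions chosen for illustration. The post only states that decay depends on recency and access frequency.

```python
import math
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    embedding: list       # toy embedding vector (assumed to be precomputed)
    age_days: float       # days since the memory was last touched
    access_count: int     # how many times it has been recalled


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def decay_weight(memory, half_life_days=30.0):
    """Hypothetical decay: halve the weight every `half_life_days`,
    then boost memories that have been recalled often."""
    recency = 0.5 ** (memory.age_days / half_life_days)
    frequency = 1.0 + math.log1p(memory.access_count)
    return recency * frequency


def recall(query_embedding, memories, k=2):
    """Rank memories by semantic similarity scaled by the decay weight."""
    ranked = sorted(
        memories,
        key=lambda m: cosine(query_embedding, m.embedding) * decay_weight(m),
        reverse=True,
    )
    return ranked[:k]


memories = [
    Memory("used light mode", [1.0, 0.0], age_days=365, access_count=0),
    Memory("prefers dark mode", [1.0, 0.0], age_days=1, access_count=3),
    Memory("lives in Berlin", [0.0, 1.0], age_days=1, access_count=0),
]
top = recall([1.0, 0.0], memories)
```

In this toy data the two theme-related memories are equally similar to the query, so the fresh, frequently recalled one ranks ahead of the year-old one purely because of the decay weight.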

That architecture gives developers persistent memory without slowing down the user experience.
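The split between synchronous capture and asynchronous augmentation can be pictured with a queue and a background worker. This is a rough sketch of the general pattern, not Memori's implementation: the in-memory lists, the `capture` and `extract_facts` names, and the string-matching extractor are all hypothetical stand-ins.

```python
import queue
import threading

conversation_log = []     # stands in for the durable conversation store
extracted_facts = []      # stands in for structured memory rows
pending = queue.Queue()   # work handed off to the background worker


def capture(user_id, message):
    """Request path: store the raw turn and return without extracting."""
    conversation_log.append((user_id, message))
    pending.put((user_id, message))


def extract_facts(message):
    """Toy extractor; a real system would use an LLM or NLP pipeline."""
    prefix = "I prefer "
    if message.startswith(prefix):
        return [("user", "prefers", message[len(prefix):].rstrip("."))]
    return []


def augmentation_worker():
    """Background path: turn captured messages into (s, p, o) triples."""
    while True:
        item = pending.get()
        if item is None:          # sentinel: shut the worker down
            pending.task_done()
            break
        user_id, message = item
        for subject, predicate, obj in extract_facts(message):
            extracted_facts.append((user_id, subject, predicate, obj))
        pending.task_done()


worker = threading.Thread(target=augmentation_worker, daemon=True)
worker.start()

capture("u1", "I prefer dark mode.")
capture("u1", "What's the weather?")

pending.join()       # wait until the worker has drained the queue
pending.put(None)    # then ask it to stop
worker.join()
```

The request path only appends and enqueues, so its cost stays small no matter how expensive extraction becomes. The triples produced by the worker are the kind of input a knowledge graph step could then connect.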

What ships at launch

Memori Cloud launches with:

- fully managed memory infrastructure

- semantic recall for future prompts

- knowledge graph construction

- a Cloud Dashboard for analytics and debugging

The dashboard includes:

- Memories to inspect memory rows, subjects, retrieval counts, and graph relationships

- Analytics to track created and recalled volume, cache hit rate, sessions, users, and quota usage

- Playground to take Memori for a spin and watch how it creates memories and constructs the graph in a SQL-native way

Same SDK, different deployment model

Memori Cloud and Memori BYODB use the same SDK. That means teams can start with the fully hosted option for speed, then move to BYODB, VPC, or on-prem deployments if they need more infrastructure control.

| Option | Best for teams that want | Setup |
|---|---|---|
| Memori Cloud | the fastest path to production; minimal ops overhead; managed storage and built-in observability | set MEMORI_API_KEY; initialize Memori() |
| Memori BYODB | full control over storage and infrastructure; your own database and deployment boundary; self-managed operations | provide conn=...; run the storage schema build; operate memory on your infrastructure |

One practical note: memories do not automatically transfer between Cloud and BYODB environments, so plan migrations accordingly.
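Because the two options differ mainly in configuration, an application can decide which one it is targeting at startup. The helper below is a hypothetical sketch that does not call the Memori SDK. `MEMORI_API_KEY` comes from the table above, while `resolve_deployment` and the `MEMORI_DB_URL` variable are names invented for this example.

```python
import os


def resolve_deployment(env=None):
    """Pick a deployment mode from environment-style settings.

    Returns a small dict describing the mode; how that is passed to the
    SDK is left out, since it depends on the SDK version in use.
    """
    env = os.environ if env is None else env

    if env.get("MEMORI_API_KEY"):
        # Hosted option: only the API key is needed.
        return {"mode": "cloud"}

    conn = env.get("MEMORI_DB_URL")  # hypothetical variable name
    if conn:
        # BYODB option: the application supplies its own connection.
        return {"mode": "byodb", "conn": conn}

    raise RuntimeError("Set MEMORI_API_KEY or provide a database connection")


cloud = resolve_deployment({"MEMORI_API_KEY": "example-key"})
byodb = resolve_deployment({"MEMORI_DB_URL": "postgresql://localhost/memori"})
```

Keeping the choice in one place also makes the migration caveat visible: switching modes changes where memories live, and existing memories do not follow automatically.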

Ship Memory-Native AI, Faster

If you’re building AI agents, copilots, or LLM applications that need long-term memory, Memori Cloud gives you a production-ready memory layer without the ops overhead.

You get persistent, LLM-agnostic memory, semantic recall, observability, and flexible deployment options - all built on the same Memori primitives.

Start Now

- Sign up: app.memorilabs.ai

- Cloud docs →

- BYODB docs →